Bridging the Gap: How Smart Demand Management Can Forestall the AI Energy Crisis

Frank Long is a Vice President at the Goldman Sachs Global Institute, where he focuses on AI.

George Lee is Co-Head of the Goldman Sachs Global Institute, where he focuses on AI.

- The AI Power Bottleneck: AI's insatiable power demand is outpacing the grid's decade-long development cycles, creating a critical bottleneck.

- Curtailment Unlocks Untapped Power: The US grid has 76–126 GW of slack power that could potentially be accessed by companies that can pause demand during periods of peak strain lasting a few hours each.

- A Perfect Match: The AI industry needs power immediately in order to scale rapidly. Fortunately, AI workloads can pause operations and take part in curtailment far more readily than traditional cloud workloads.

- From Grid Burden to Grid Asset: This transforms AI into a powerful grid ally, a "shock absorber" that boosts efficiency, monetizes idle infrastructure, and provides the grid responsiveness needed to pave the way for greater adoption of intermittent energy generation like solar and wind.

Crisis Becomes an Opportunity

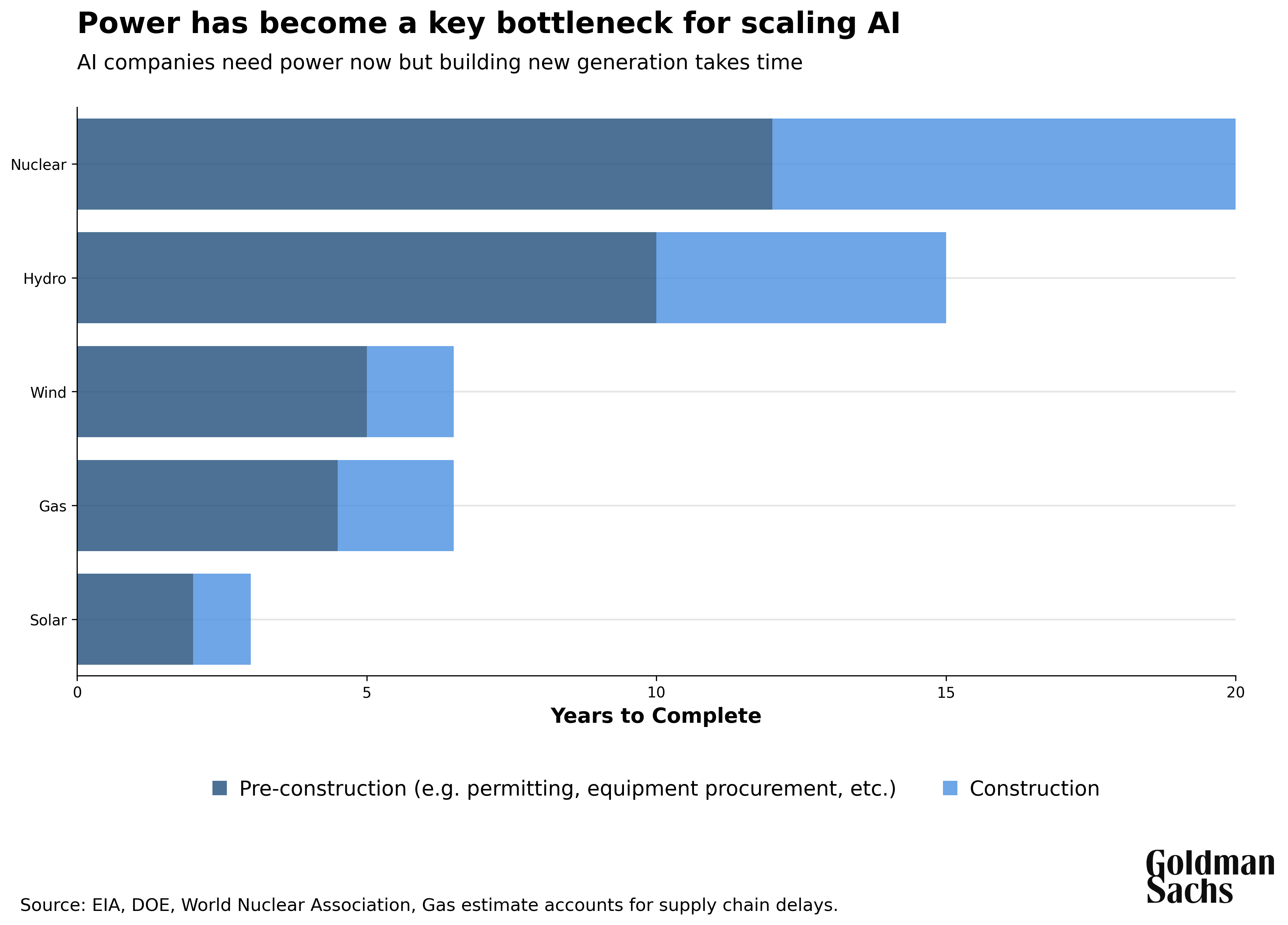

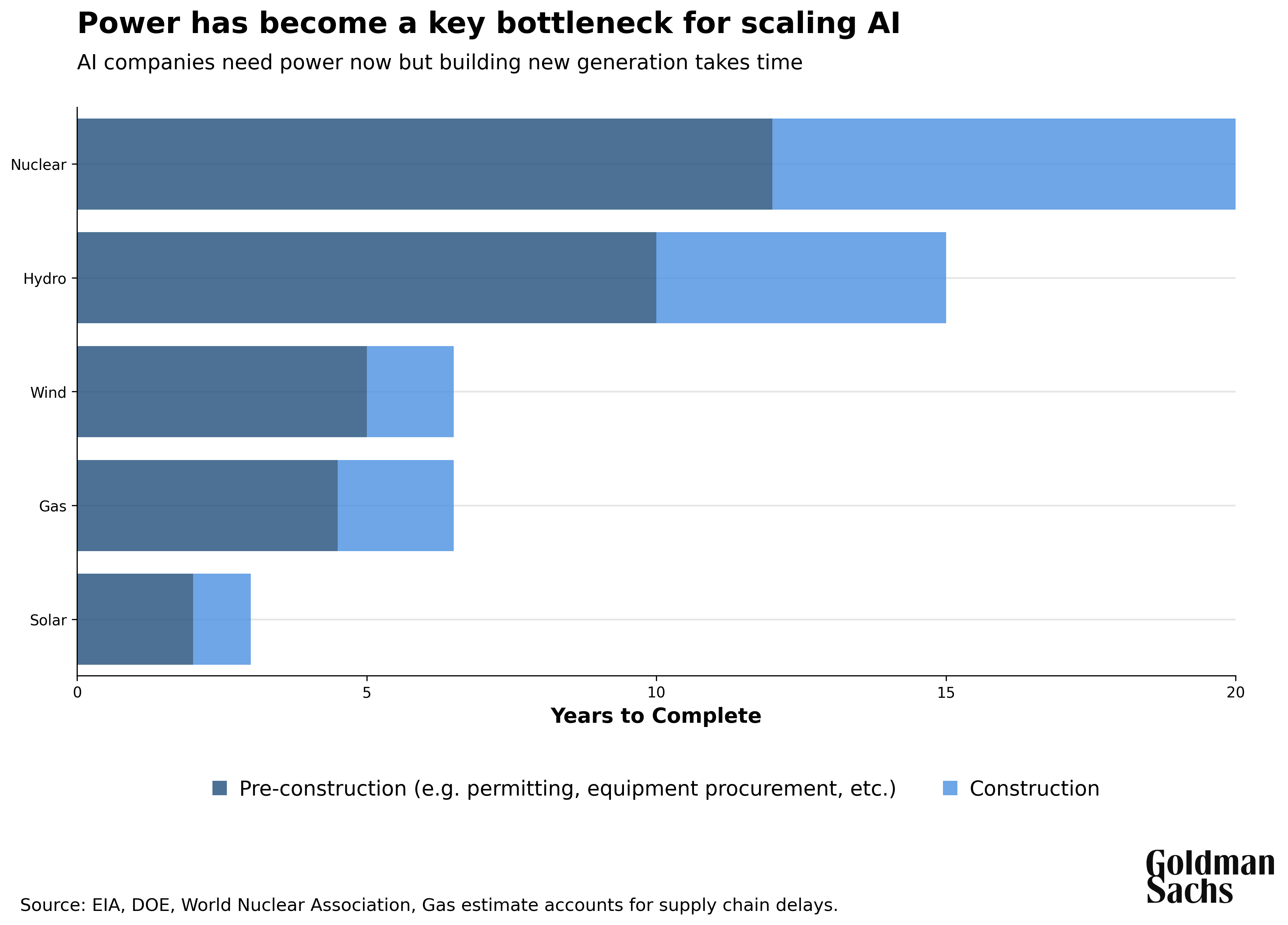

The AI revolution has triggered an unprecedented power demand surge. AI datacenters could drive 100 GW of incremental power demand around the world by 2030, roughly the consumption of 75 million American homes [1]. This represents a sea change for the US power industry, which has grown accustomed to nearly zero growth for two decades, and where building additional capacity can take a decade [2]. This mismatch between the existing power system and soaring AI demand could lead to a bottleneck, either creating unprecedented strain for the grid or holding back AI progress.

However, a fundamental shift in the nature of AI workloads could offer a bridge solution. AI training and inference can be paused and load-balanced to a much greater degree than most of the workloads that run in datacenters today. This flexibility opens the door to "curtailment programs," where datacenters run at full throttle for most of the year but are dialed back for a few hours at a time when the grid is under stress. Curtailment has enormous potential to unlock latent power, because the grid was never designed for average demand, but instead for the moments when demand is highest, such as the hottest summer afternoon. Consequently, for most hours of the year, huge swaths of power generation and transmission sit idle. For tech companies, losing that final fraction of uptime is a small price to pay to unlock gigawatts of power available today in an industry where pace of execution is critical.

What if, thanks to curtailment, instead of overwhelming the grid, AI datacenters became the shock absorbers that finally unlocked this stranded capacity? Curtailment could drive higher utilization from existing power infrastructure in ways that create value that spills over to utilities and ratepayers alike. These more efficient usage patterns pioneered for AI datacenters could also reshape how we think about grid operations as a whole and evolve our grid to be more responsive and better prepared for intermittent power sources like solar and wind. The AI power challenge isn't just about keeping the lights on; it is a generational stress test that forces us to reimagine how we use the grid itself.

Datacenter Uptime Assumptions Drove an AI Power Crisis

To appreciate just how revolutionary AI workloads are for grid planning, we must first understand the rigid requirements that have defined datacenter infrastructure for decades. Up to this point, the datacenter industry has been built with a central focus on maximizing uptime, or the share of total time the datacenter remains fully powered and operational. For datacenter developers, higher uptime translates directly to more reliable service for end users and the ability to charge tenants higher rates.

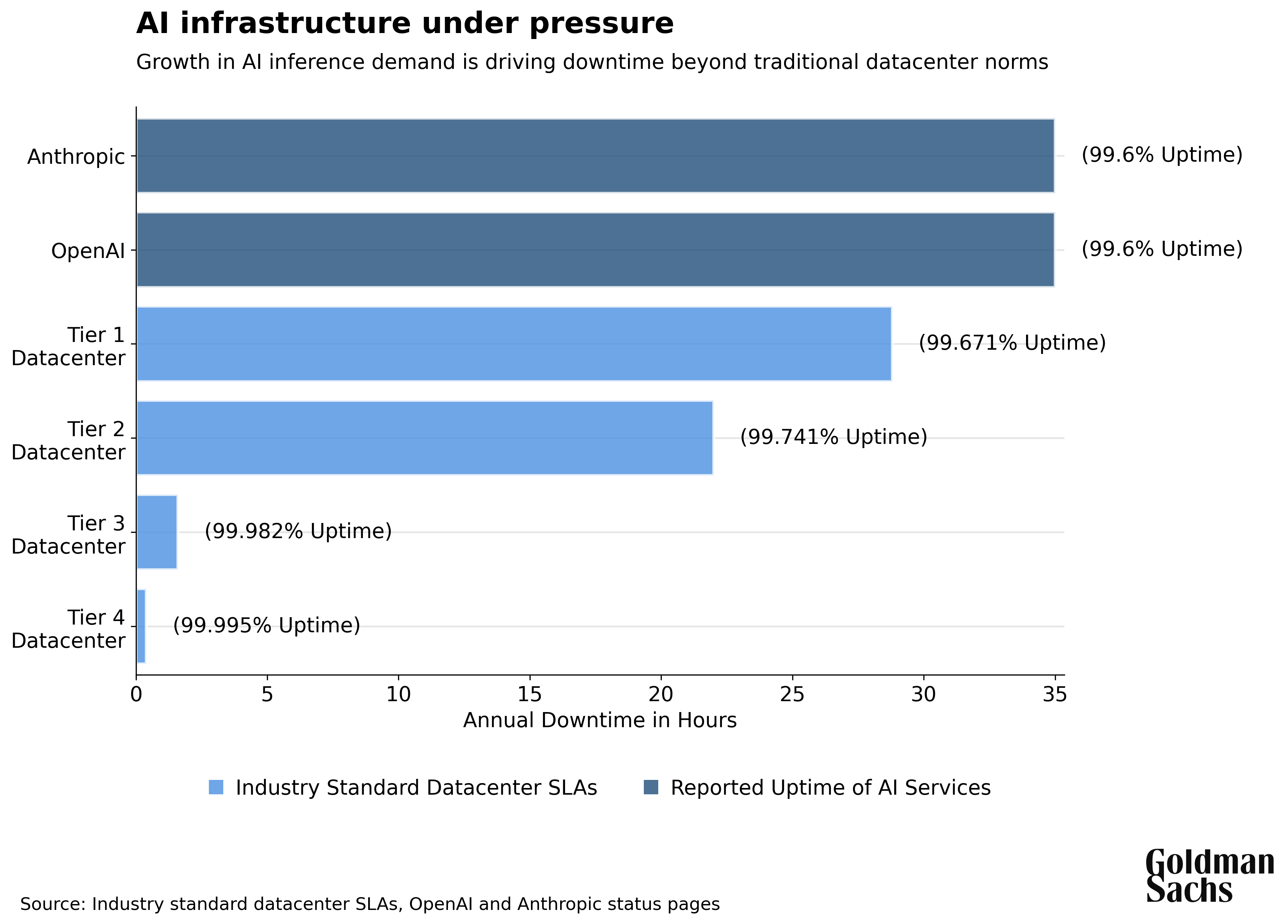

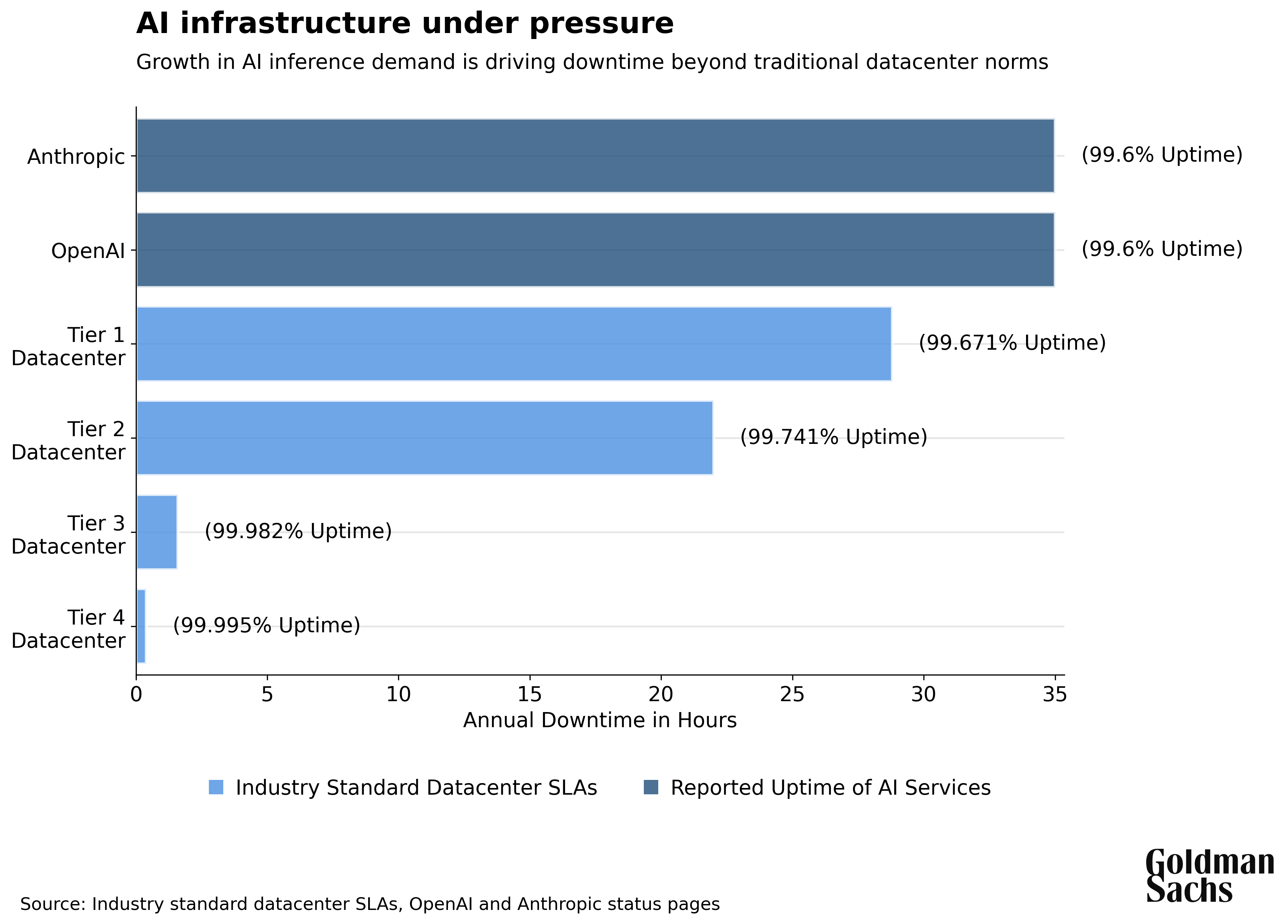

Datacenters are classified into tiers based on reliability, with higher tiers costing much more to build and therefore to rent. The most common is Tier 3, which achieves 99.982% uptime (1.6 hours of downtime annually). The industry's focus on reliability is best illustrated by Tier 4 datacenters, which reach 99.995% uptime with only 26 minutes of downtime annually but cost twice as much to gain that final 0.013 percentage points of performance. Even the lowest-grade Tier 1 datacenters are expected to maintain 99.671% annual uptime [3]. Developers wouldn't be building more capital-intensive Tier 4 datacenters if customers didn't demand higher reliability. High-performance enterprise databases routinely add even more redundancy to achieve the vaunted "five nines" reliability (99.999%) [4].
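The downtime figures quoted for each tier follow directly from the uptime percentages. The short Python sketch below reproduces that arithmetic, assuming a non-leap year of 8,760 hours; the function and variable names are illustrative and do not come from any tier standard.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year


def annual_downtime_hours(uptime_percent: float) -> float:
    """Hours per year a facility may be offline at a given uptime percentage."""
    return (100.0 - uptime_percent) / 100.0 * HOURS_PER_YEAR


# Uptime percentages quoted in the paragraph above.
TIER_UPTIME = {"Tier 1": 99.671, "Tier 3": 99.982, "Tier 4": 99.995}

for tier, uptime in TIER_UPTIME.items():
    hours = annual_downtime_hours(uptime)
    print(f"{tier}: {hours:.2f} hours ({hours * 60:.0f} minutes) of downtime per year")
```

Under these assumptions, Tier 1 works out to roughly 29 hours of allowable downtime per year, against about 1.6 hours for Tier 3 and about 26 minutes for Tier 4.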

Speed and Scale Trump Perfect Uptime in AI Infrastructure

The datacenter industry operates on the premises that computational workloads are inflexible and that providing incredibly reliable power and instant response times is paramount. These were reasonable assumptions until recently, as up until the AI boom, datacenters made up a relatively minor share of grid power demand, and overall demand was flat or declining.

That operating model is now out of date. AI computing is a regime change from traditional cloud computing, as scale and speed to market now matter far more than uptime. Time to market is critical for AI companies because faster power deployment creates a powerful flywheel effect: it allows faster scaling of AI infrastructure, which in turn allows quicker deployment of new models, which then generates more usage and data, which fuel the next generation of AI models.

This cycle makes speed one of the ultimate competitive advantages in AI. Competitive advantage in AI comes from deploying the largest models fastest, not from achieving 99.995% versus 99.671% uptime (the gap between the most reliable datacenters and the simplest). Ultra-high reliability has taken a backseat to scale. Perhaps the clearest illustration is the fact that today’s AI services are already operating at uptime levels comparable to the lowest tier of traditional datacenter infrastructure, in the face of surging demand for the latest and greatest models [5, 6].

The Flexibility Revolution: How AI Enables Curtailment

This new model highlights a key feature of AI compute that, if thoughtfully leveraged, can alleviate growing pressure on the power grid. AI workloads are surprisingly flexible in terms of when and where they are executed, which can mitigate the challenge posed by losing that final percentage point of uptime. AI training (the process of "teaching" models by processing vast datasets) can pause and resume using "checkpoints," much like saving a document draft before closing your computer and returning to it later [7]. This means that during planned downtime, training can pause and resume when power returns, or be redirected to another datacenter.
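The pause-and-resume pattern described above can be sketched in a few lines of Python. This is a toy illustration under loud assumptions: the "model" is a single number, the curtailment signal is a hypothetical `curtail_at` step, and all function names are invented for this example. Real training frameworks save full model and optimizer state, but the control flow is similar in spirit.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, state: dict) -> None:
    """Write state to a temp file, then rename, so a crash cannot corrupt it."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    """Return the last saved state, or a fresh state if no checkpoint exists."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "weight": 0.0}


def train(path: str, total_steps: int, curtail_at=None, checkpoint_every: int = 10) -> dict:
    """Run a toy training loop; pause if the (hypothetical) curtailment step is hit."""
    state = load_checkpoint(path)
    while state["step"] < total_steps:
        if curtail_at is not None and state["step"] == curtail_at:
            save_checkpoint(path, state)  # persist progress before pausing
            return state
        state["weight"] += 0.5  # stand-in for one real optimizer update
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "model.ckpt.json")
    paused = train(ckpt, total_steps=100, curtail_at=37)  # grid event at step 37
    resumed = train(ckpt, total_steps=100)                # power returns
    print(paused["step"], resumed["step"], resumed["weight"])
```

The second call picks up at step 37 rather than step 0, so the only cost of the pause is elapsed time. Pointing the second call at a checkpoint file copied to another site would model the "redirected to another datacenter" case.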

LLM (Large Language Model) inference—the process of running the AI model to generate responses for users—is also highly flexible relative to traditional cloud workloads. This is because AI inference is so computationally intensive, relative to traditional workloads like loading a webpage, that inference is constrained by response generation time rather than network latency.

It is difficult to overstate the importance of this shift. Traditional web applications have conditioned users to expect lightning-fast responses. Studies from leading tech companies show that a mere 0.1-second improvement in load time drives 8-10% increases in conversions for retail sites [8]. As page load times stretch from 1 to 3 seconds, bounce probability jumps 32%. At 10 seconds, it skyrockets 123%. This millisecond obsession has shaped digital infrastructure since the dawn of the internet [9].

ChatGPT, for example, can take roughly 20 seconds to generate a response roughly the length of this section of this paper [10]. To put this into perspective, consider a user in New York who is querying a datacenter in Tokyo, where network latency (the time it takes signals to travel between the two locations) is ~170 milliseconds each way [11]. This delay would be incredibly damaging for traditional web applications (e.g. >10% drop in retail sales conversions!) but is immaterial for AI. This tolerance means AI applications can load balance across continents in ways that were impossible in the millisecond-obsessed web era, allowing companies to access cheaper power and grid capacity wherever it's available without sacrificing user experience.

The flexibility of AI inference workloads becomes even more pronounced with agentic AI: systems that perform multi-step tasks through multiple prompts and reasoning cycles. These agents can often run for tens of minutes on complex research [12], coding, or analytical tasks [13]. When computation time stretches this long, even 100 one-way trips from New York to Tokyo (about 17 seconds total) become minor compared to the aggregate time it takes for users to receive their final reply.
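To make the latency comparison concrete, the sketch below computes what share of a user's total wait is spent on network transit. It uses the ~170 ms one-way figure and the ~20 second response time from the text. The 30-minute agent runtime is an assumption chosen to stand in for "tens of minutes", and treating the 20 seconds as pure generation time is also an assumption.

```python
ONE_WAY_LATENCY_S = 0.170  # New York to Tokyo, one way, as quoted in the text


def network_share(trips: int, compute_s: float) -> float:
    """Fraction of the total wait time spent on network transit."""
    network_s = trips * ONE_WAY_LATENCY_S
    return network_s / (network_s + compute_s)


# A single chat reply: one round trip (two one-way trips), ~20 s of generation.
chat = network_share(trips=2, compute_s=20.0)

# An agentic task: 100 one-way trips, ASSUMING 30 minutes of computation.
agent = network_share(trips=100, compute_s=30 * 60)

print(f"chat reply: {chat:.1%} of the wait is network transit")
print(f"agent task: {agent:.1%} of the wait is network transit")
```

In both cases the network accounts for less than 2% of the wait under these assumptions, which is why routing requests to a distant datacenter barely changes the user experience.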

The rise of AI agents is fundamentally rewiring users’ expectations around a new class of "set it and forget it" tasks. While traditional web applications demand constant real-time attention and near immediate responses, like juggling, AI tasks operate more like plate spinning, where users can set multiple processes in motion simultaneously that will take several minutes to run, without needing to pay close and constant attention to each of them.

A Bridge Solution: Slack Capacity on the US Grid

The flexibility of AI workloads enables two breakthroughs for the US energy system. First, when the energy grid reaches capacity, AI companies can pause certain workloads and temporarily reduce demand during peak periods. Second, AI datacenters can load balance across a much larger geographical space, routing computational work and power demand to wherever grid capacity is most available at any given moment.

The key question for power companies and infrastructure investors then isn’t just whether to build new power, but also how to bridge the gap until that power comes online. The US power grid is built for peak demand, such as the hottest day of summer when air conditioners strain the system, rather than average demand. This strategy means there is excess slack capacity most of the time. Therefore, the answer to getting 100 GW of power by 2030 may be hiding in plain sight: using existing slack capacity. Curtailment offers the possibility to bring substantial power online quickly while the lengthy process of building new infrastructure is also underway [14, 15, 16, 17, 18].

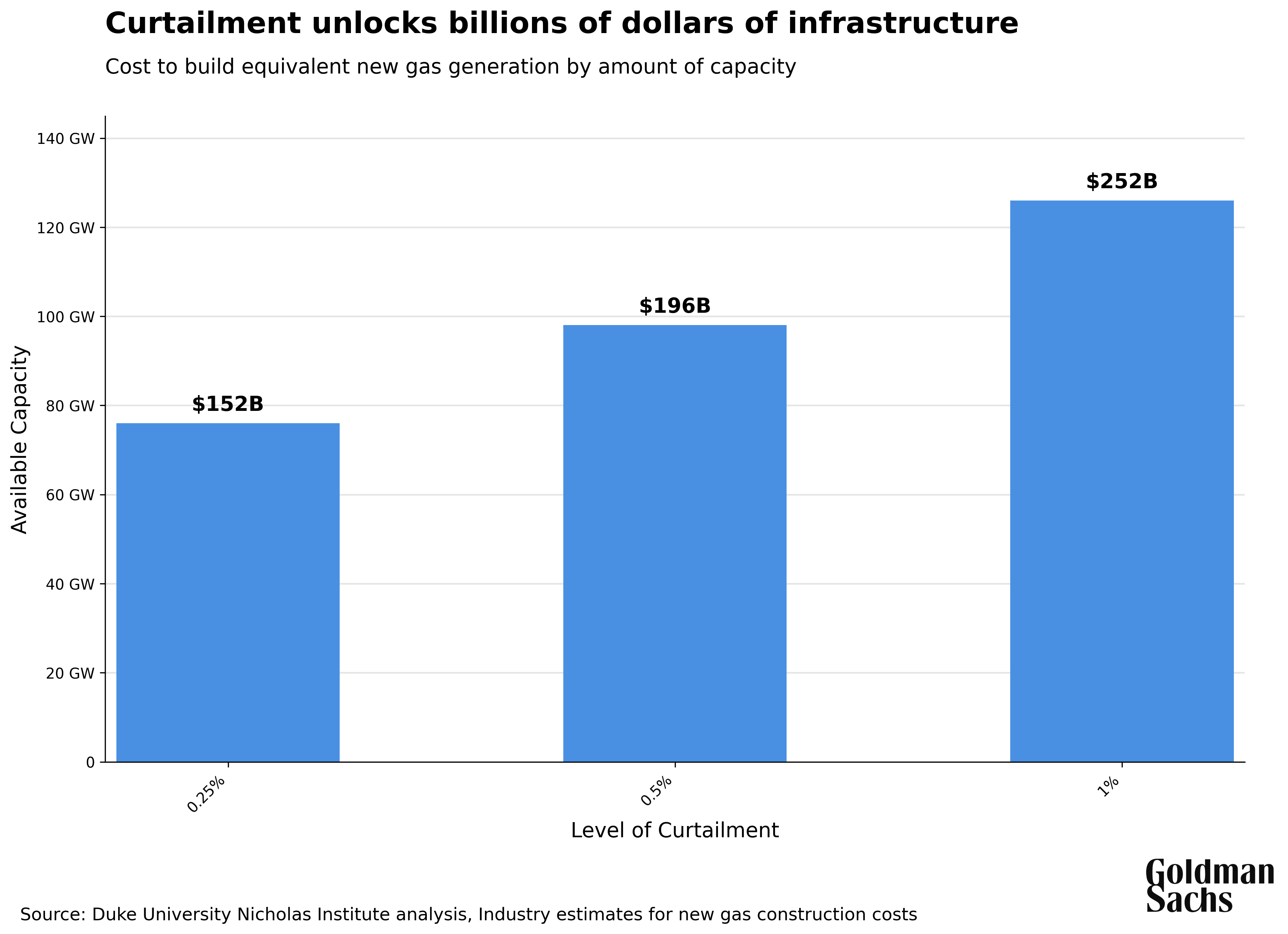

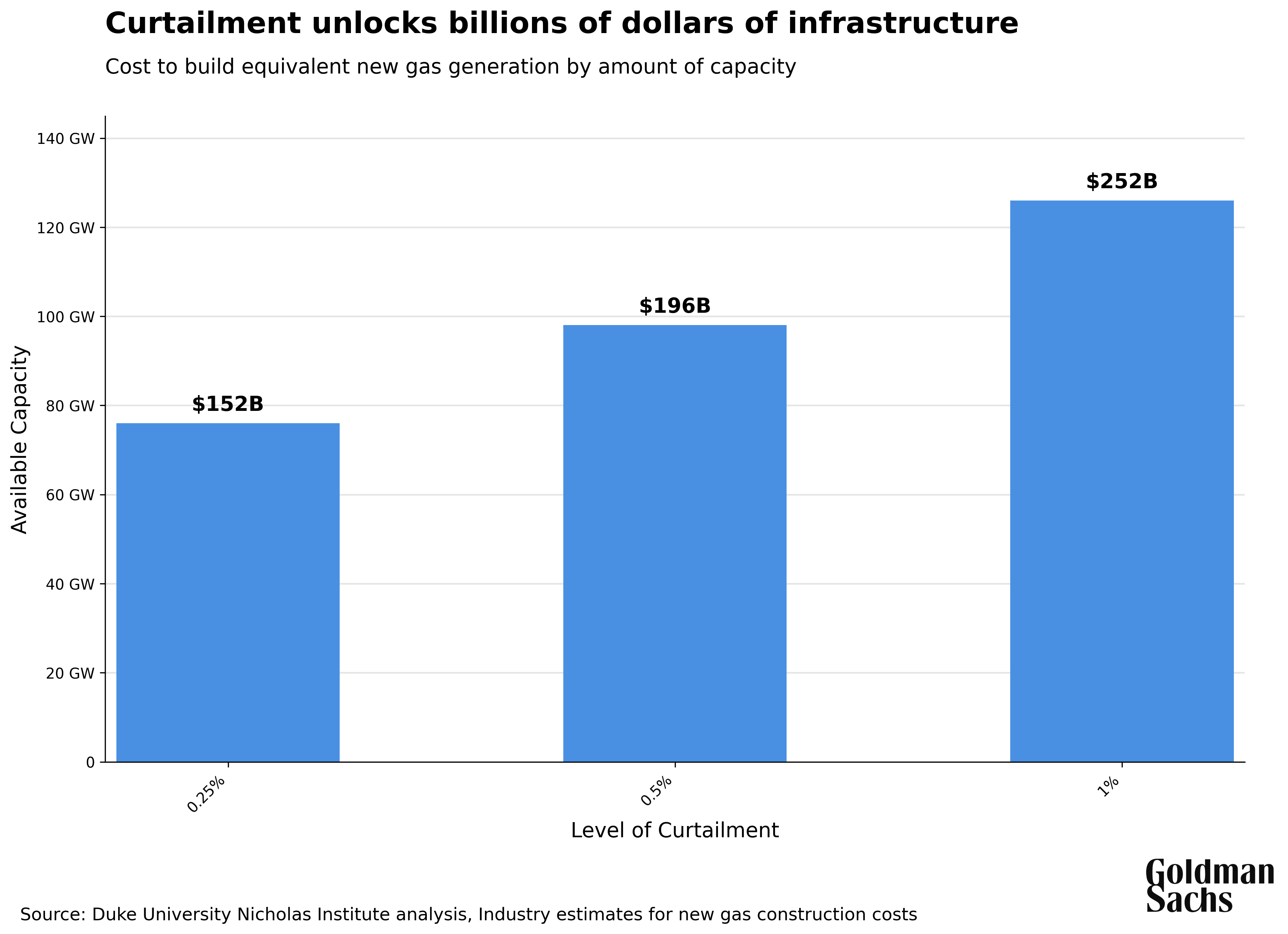

Recent analysis from Duke University's Nicholas Institute quantifies this opportunity: 76 GW of new load capacity could become available at 99.75% uptime (0.25% curtailment), scaling to 126 GW at 99% uptime (1% curtailment). According to this study, curtailment could add 10% to the nation's effective capacity without building new infrastructure. These curtailment events are typically short, averaging 1.7 to 2.5 hours, and retain high load levels, with 88% of curtailment hours maintaining at least 50% of normal capacity [19]. By participating in curtailment programs, AI companies may not need to wait for new energy infrastructure to be built for every incremental watt of datacenter demand.
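A rough sense of scale for these curtailment levels can be worked out in Python. The sketch below treats the curtailment percentage as a share of annual hours, as the uptime framing above does, and assumes full curtailment during each event. Since the text notes that most curtailment hours retain at least half of normal load, the event counts are an illustrative simplification rather than a result from the study. All names are invented for this example.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year
EVENT_HOURS = (1.7, 2.5)   # average event duration range quoted in the text


def curtailed_hours(uptime_percent: float) -> float:
    """Full-curtailment-equivalent hours per year at a given uptime."""
    return (100.0 - uptime_percent) / 100.0 * HOURS_PER_YEAR


def event_count_range(uptime_percent: float) -> tuple:
    """(fewest, most) events per year, ASSUMING every event is a full curtailment."""
    hours = curtailed_hours(uptime_percent)
    shortest, longest = EVENT_HOURS
    return hours / longest, hours / shortest


# (uptime %, new load in GW) pairs quoted in the text.
for uptime, new_load_gw in [(99.75, 76), (99.0, 126)]:
    low, high = event_count_range(uptime)
    print(f"{new_load_gw} GW at {uptime}% uptime: "
          f"{curtailed_hours(uptime):.1f} h/year, roughly {low:.0f} to {high:.0f} events")
```

On these assumptions, 0.25% curtailment corresponds to about 22 hours per year and 1% to about 88 hours per year.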

The Upside: Quick Power, Lower Cost, Stronger Grid

The expedited time to market enabled by curtailment has significant appeal in a world where grid interconnection backlogs have stretched from under two years for projects built between 2000 and 2007, to over four years for those built between 2018 and 2023, to more than ten years today [20]. Even building dedicated co-located power infrastructure for AI datacenters, which avoids the complexity of the grid altogether, cannot match the speed of participating in curtailment, because major gas turbine manufacturers now have backlogs stretching out to 2029 or later [21].

The economics of curtailment are compelling as well. If this process can unlock roughly 100 GW of capacity (within the 76 to 126 GW range estimated by the Duke University study), at an assumed cost of construction of $1,500/kW [22], that would represent approximately $150 billion of additional power infrastructure to be leveraged. Analysis of data from the Environmental Protection Agency [23] shows multiple power plants across the country with 100 MW of capacity utilizable through curtailment programs, each representing over $150 million worth of infrastructure.
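The dollar figures above are simple unit conversions, reproduced in the Python sketch below. The $1,500/kW construction cost is the assumption stated in the text, and the function name is illustrative.

```python
COST_PER_KW_USD = 1_500  # assumed construction cost per kW, as stated in the text
KW_PER_MW = 1_000


def replacement_value_usd(capacity_mw: int) -> int:
    """Cost to build the given capacity from scratch at the assumed $/kW."""
    return capacity_mw * KW_PER_MW * COST_PER_KW_USD


grid_wide = replacement_value_usd(100_000)  # 100 GW expressed in MW
single_plant = replacement_value_usd(100)   # one 100 MW plant

print(f"100 GW: ${grid_wide:,}")
print(f"100 MW: ${single_plant:,}")
```

The same function can be rerun with the 76 GW or 126 GW endpoints of the Duke range to see how sensitive the headline figure is to the capacity assumption.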

The benefits of curtailment extend beyond AI companies to utilities, ratepayers, and infrastructure investors as well, given that it can address a fundamental economic challenge: low grid utilization. Most grid infrastructure operates at roughly 53% of its capacity [24], meaning billions in fixed assets sit idle. However, flexible AI workloads allow utilities to amortize these fixed costs over more load, reducing per-unit costs for all ratepayers without adding strain during peak periods. For power investors, this represents a massive opportunity to extract more value from generation and transmission assets by driving up revenue on the same fixed cost base. Curtailment presents a fundamentally different vision of AI's role in the energy system: not as a crisis for the grid but rather as a shock absorber.

Conclusion: Two Revolutions Collide

The prevailing narrative frames AI as an energy apocalypse that will overwhelm our electrical grid. We argue the opposite: AI datacenters can become grid assets, unlocking massive capacity currently constrained by outdated peak-demand planning. By aligning AI's computational flexibility with the grid's need for demand response, we can expand capacity immediately using power infrastructure that is already built and paid for.

In AI, speed to market creates decisive competitive advantages. Companies that deploy larger models faster gain user data that fuels the next generation of models, creating a virtuous cycle where early movers compound their lead. Meanwhile, geopolitical competition intensifies as China moves to expand its AI infrastructure without the same grid constraints facing the US [25]. America cannot afford to let power limitations become the bottleneck that hands AI leadership to competitors.

We are witnessing a unique convergence that has created a window of opportunity to pursue curtailment to bridge the gap between datacenter demand and power supply. On the supply side, the US grid is changing just as fundamentally as the new AI workloads that sit on top of it. Intermittent renewables are replacing predictable fossil fuel power sources, making electricity generation inherently variable rather than dispatchable on demand. This supply-side revolution means the demand side must change too. Consumption patterns can no longer assume power is always available when needed. The energy system must evolve from subservient infrastructure where supply bends over backwards to meet whatever demand exists into a bidirectional system where both sides continuously optimize against each other.

AI workloads, with their unprecedented flexibility, represent a unique opportunity to adapt to this new reality. With over $300 billion of annual investment [26], AI infrastructure is providing the economic impetus that hasn't existed for decades to make curtailment participation viable at scale. Curtailment may sound like arcane grid terminology, but it's actually where two core revolutions of our lifetime collide: AI and renewable energy. For tech companies, private equity sponsors, infrastructure developers, and utilities, today's AI curtailment experiments have the potential to become tomorrow's template for a new energy paradigm that unlocks value from existing assets while accelerating both AI deployment and clean energy viability.

Additional Reading:

1 International Energy Agency, "AI is set to drive surging electricity demand from data centres," https://www.iea.org/news/ai-is-set-to-drive-surging-electricity-demand-from-data-centres-while-offering-the-potential-to-transform-how-the-energy-sector-works

2 Grid Strategies, “The Era of Flat Power Demand is Over,” https://gridstrategiesllc.com/wp-content/uploads/2023/12/National-Load-Growth-Report-2023.pdf

3 Hewlett Packard Enterprise, "What Are Data Center Tiers," https://www.hpe.com/us/en/what-is/data-center-tiers.html

4 Google Cloud, "Regional, dual-region, and multi-region configurations," https://cloud.google.com/spanner/docs/instance-configurations

5 OpenAI, "OpenAI Status API," https://status.openai.com/api/v2/status.json

6 Anthropic, "Claude Status API," https://status.anthropic.com/api/v2/status.json

7 Google Cloud, "Save early and often with multi-tier checkpointing to optimize large AI training jobs," https://cloud.google.com/blog/products/ai-machine-learning/using-multi-tier-checkpointing-for-large-ai-training-jobs

8 Google, "Milliseconds Make Millions Report," https://www.thinkwithgoogle.com/_qs/documents/9757/Milliseconds_Make_Millions_report_hQYAbZJ.pdf

9 Steven Levy, "In the Plex: How Google Thinks, Works, and Shapes Our Lives," https://books.google.com/books?id=V1u1f8sv3k8C&pg=PA186&lpg=PA186&dq="code+yellow"+google+steven+levy&source=bl&ots=BRoR5uchiB&sig=xcGTJUE_a0oLbCB_vdRKZkbnmPU&hl=en&sa=X&ei=pagLT7LfD43ViALkmNX8Aw&ved=0CCQQ6AEwAQ#v=onepage&q&f=false

10 Combined data from OpenAI Platform, "Tokenizer Tool," https://platform.openai.com/tokenizer, and LLM Utils, "OpenAI Status Tracker," https://openai-status.llm-utils.org/

11 Wonder Network, "Network Ping Testing - New York to Tokyo," https://wondernetwork.com/pings/

12 OpenAI, “Deep Research,” https://help.openai.com/en/articles/10500283-deep-research-faq

13 ArsTechnica, “New Claude 4 AI model refactored code for 7 hours straight,” https://arstechnica.com/ai/2025/05/anthropic-calls-new-claude-4-worlds-best-ai-coding-model/

14 World Nuclear Association, "Nuclear Power in the USA," https://world-nuclear.org/information-library/country-profiles/countries-t-z/usa-nuclear-power

15 US Department of Energy, "New Report Examines the U.S. Hydropower Permitting Process," https://www.energy.gov/eere/water/articles/new-report-examines-us-hydropower-permitting-process

16 US Energy Information Administration, "Solar, battery storage to lead new U.S. generating capacity additions in 2025," https://www.eia.gov/todayinenergy/detail.php?id=64586

17 US Energy Information Administration, "U.S. electric power sector reported fewer delays for new solar capacity projects in 2023," https://www.eia.gov/todayinenergy/detail.php?id=62003

18 Heatmap News, "The Natural Gas Turbine Crisis," https://heatmap.news/ideas/natural-gas-turbine-crisis

19 Duke University Nicholas Institute, "Rethinking Load Growth: Assessing the Potential for Integration of Large Flexible Loads in US Power Systems," https://nicholasinstitute.duke.edu/publications/rethinking-load-growth

20 Lawrence Berkeley National Laboratory, "Queues Report," https://emp.lbl.gov/queues

21 Heatmap News, "The Natural Gas Turbine Crisis," https://heatmap.news/ideas/natural-gas-turbine-crisis

22 US Energy Information Administration, "U.S. construction costs dropped for solar, wind, and natural gas-fired generators in 2021," https://www.eia.gov/todayinenergy/detail.php?id=60562

23 U.S. Environmental Protection Agency, "Clean Air Markets Program Data (CAMPD)," https://campd.epa.gov/

24 Duke University Nicholas Institute, "Rethinking Load Growth: Assessing the Potential for Integration of Large Flexible Loads in US Power Systems," https://nicholasinstitute.duke.edu/publications/rethinking-load-growth

25 FT, “China is building 74% of all current solar and wind projects,” https://www.ft.com/content/e51744d9-e585-4622-91f9-e1f57c0269d9

26 CNBC, "Tech megacaps plan to spend more than $300 billion in 2025 as AI race intensifies," https://www.cnbc.com/2025/02/08/tech-megacaps-to-spend-more-than-300-billion-in-2025-to-win-in-ai.html

DISCLAIMER

The opinions and views expressed in this document do not necessarily reflect the views of Goldman Sachs or its affiliates. This document has been prepared by Goldman Sachs Global Institute and is not a product of Goldman Sachs Global Investment Research. This document is for your information only and does not constitute a recommendation from any Goldman Sachs entity to the recipient. This document should not be copied, distributed, published, or reproduced, in whole or in part. This document does not purport to contain a comprehensive overview of Goldman Sachs’ products and offerings. This document should not be used as a basis for trading in the securities or loans of any companies named herein or for any other investment decision and does not constitute an offer to sell the securities or loans of the companies named herein or a solicitation of proxies or votes. Goldman Sachs is not providing any financial, economic, legal, investment, accounting, or tax advice through this document or to its recipient. Goldman Sachs has no obligation to provide any updates or changes to the information herein. Neither Goldman Sachs nor any of its affiliates makes any representation or warranty, express or implied, as to the accuracy or completeness of the statements or any information contained in this article and any liability therefore (including in respect of direct, indirect, or consequential loss or damage) is expressly disclaimed.

© 2025 Goldman Sachs. All rights reserved.
